Over the last two decades, geological modeling has undergone a revolution, albeit not a flashy one. What once required painstaking work using cross-sections and block models is now possible using implicit modeling engines, stochastic simulations, and machine learning-based lithological interpretation tools. As the capabilities of these programs increase, the question we must pose is: are we building models for the mine, or models for conferences?

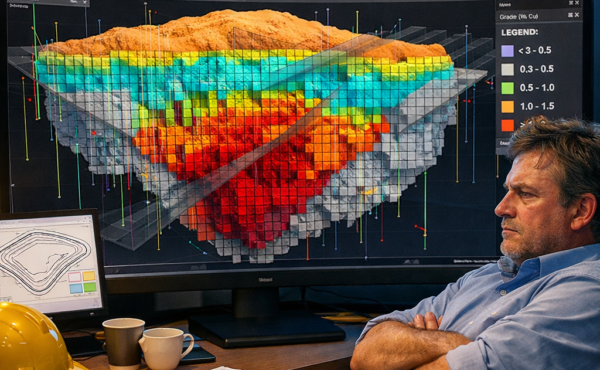

With modern packages such as Leapfrog, Datamine, and Vulcan, even the most complex geostatistical methods are now at our fingertips. Variogram modeling, conditional simulation, and multivariate domain analysis are no longer the exclusive preserve of consultants and experts. For deposits with a high nugget effect, or for mines with long lives and high capital costs, this added layer of complexity is justified. In a high-nugget gold deposit, for example, the erratic distribution of grade calls for careful simulation to avoid misallocating material in the mill feed.
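To make the term "high nugget effect" concrete, here is a minimal sketch of a spherical variogram model and the relative nugget (the share of total variance that appears even at very short distances). The function names and parameter values are invented for illustration and are not taken from any particular software package or deposit.

```python
def spherical_variogram(h, nugget, partial_sill, range_a):
    """Spherical variogram model with a nugget effect.

    h            lag distance (same units as range_a)
    nugget       discontinuity at the origin (C0)
    partial_sill structured variance above the nugget (C)
    range_a      distance at which the sill is reached
    """
    if h <= 0:
        return 0.0  # by definition, gamma(0) = 0
    if h >= range_a:
        return nugget + partial_sill  # total sill
    ratio = h / range_a
    return nugget + partial_sill * (1.5 * ratio - 0.5 * ratio ** 3)


def relative_nugget(nugget, partial_sill):
    """Fraction of the total sill accounted for by the nugget."""
    return nugget / (nugget + partial_sill)


# Hypothetical parameters: an erratic gold deposit versus a more continuous one.
high_nugget = relative_nugget(nugget=0.6, partial_sill=0.4)
low_nugget = relative_nugget(nugget=0.1, partial_sill=0.9)
print(f"relative nugget: erratic={high_nugget:.2f}, continuous={low_nugget:.2f}")
```

The higher the relative nugget, the less nearby samples tell us about an unsampled block, which is the situation in which simulation tends to earn its cost.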

Reporting standards such as the JORC Code and NI 43-101 also promote more robust and better-documented methodologies, which in turn drives the need for more complex models. After all, in a world where investor confidence and capital spend are at stake, completeness is not a nicety but a necessity.

The problem is that this added complexity is sometimes justified neither by the data nor by the planning cycle. A model that takes months to build, with its hundreds of lithological domains, nested structures, and multivariate co-simulations, may arrive at the mine just as the decisions it was built to inform are no longer relevant.

But there’s another, deeper threat lurking in the background: the old problem of “garbage in, gospel out.” A smooth model built on sparse or low-quality drilling data can inspire false confidence. Engineers and planners who are unaware of the underlying method and its limitations may over-rely on its outputs, treating them as certainties rather than probability distributions. More complexity does not necessarily reduce the risk; it may merely mask the problem.
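One way to keep outputs from being read as certainties is to report a spread rather than a single number. The sketch below assumes a set of simulated grades for one mining block (the data here are randomly generated stand-ins, and the function name is hypothetical) and summarizes them by their 10th, 50th, and 90th percentiles.

```python
import random
import statistics


def summarize_realizations(grades):
    """Return the 10th, 50th and 90th percentiles of simulated grades."""
    deciles = statistics.quantiles(grades, n=10)  # nine cut points
    return {"p10": deciles[0], "p50": deciles[4], "p90": deciles[8]}


# Hypothetical data: 200 simulated grades (g/t) for a single block.
rng = random.Random(42)
realizations = [rng.lognormvariate(0.0, 0.6) for _ in range(200)]

summary = summarize_realizations(realizations)
print({name: round(value, 2) for name, value in summary.items()})
```

A planner who sees a wide gap between the 10th and 90th percentiles is at least warned that the block grade is a range of outcomes and not a measured fact.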

The antidote is not to adopt simplistic models, but to tune the model to the problem. In short, the complexity of a model should match the quantity and quality of the data, the nature of the deposit, and the nature of the decision to be made. A scoping study model, for example, should look different from a pre-feasibility study model, which in turn should look different from a short-term planning model.

Finally, the gap between model and reality must be closed through cooperation. Geologists, mine planners, and metallurgists should all be involved in developing the model from the beginning, to ensure that its outputs are relevant to the questions that need to be answered. Reconciliation against actual production then provides the feedback that grounds advanced models in reality.
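As a minimal sketch of what that feedback can look like, the example below compares model-predicted tonnes, grade, and contained metal with what the mill actually received, expressed as simple actual-to-predicted ratios. The class, the function name, and all figures are hypothetical; real reconciliation schemes usually track several such factors along the chain from model to mill.

```python
from dataclasses import dataclass


@dataclass
class Production:
    tonnes: float
    grade: float  # g/t

    @property
    def metal(self) -> float:
        """Contained metal in grams (tonnes x g/t)."""
        return self.tonnes * self.grade


def reconciliation_factors(predicted: Production, actual: Production) -> dict:
    """Ratio of actual to predicted; 1.0 means the model matched reality."""
    return {
        "tonnes": actual.tonnes / predicted.tonnes,
        "grade": actual.grade / predicted.grade,
        "metal": actual.metal / predicted.metal,
    }


# Hypothetical figures for one month of production.
model = Production(tonnes=100_000, grade=1.50)
mill = Production(tonnes=95_000, grade=1.40)

factors = reconciliation_factors(model, mill)
for name, value in factors.items():
    print(f"{name}: {value:.2f}")
```

Factors that drift consistently away from 1.0 are a signal to revisit the model's assumptions, however sophisticated the method behind it.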

The trouble with modern geological models is not that they are too complex or too simple; it is that they are often misfit, built without a clear purpose in mind. The most effective model is not necessarily the most sophisticated, but the one that best informs the next important decision. Before reaching for the many modeling tools now available, the mine planner must first ask a deceptively simple question: What decision does this model need to inform? This, and not the complex algorithm, is the foundation of mine planning.